Backup and Disaster Recovery for Florida SMBs

Monday opens normally in Orlando. Staff log in, phones ring, patients or clients start arriving, and then one screen shows an encryption notice. Another can’t reach the server. Scheduling stops. Billing stops. Intake stops. If you run a law office, dental practice, CPA firm, architecture studio, or multi-location service business in Central Florida, that moment stops revenue faster than most owners expect.

Florida businesses usually think about storms first. They should. But in practice, I see just as many shutdowns caused by ransomware, failed updates, aging storage, accidental deletion, and power problems that expose weak recovery planning. A backup file sitting somewhere isn’t the same as being able to keep the business operating.

Why Your Florida Business Needs a Real Recovery Plan

An Orlando law firm can survive a bad weather day. It has a much harder time surviving two days without document access, case notes, or billing. A Winter Springs dental office can reschedule around one broken workstation. It can’t function well if imaging, charts, and e-prescribing stay offline through a full patient schedule.

That’s the point business owners miss. Backup protects copies of data. Disaster recovery restores operations, systems, access, and the order everything has to come back online.

What owners usually assume

Most owners I talk to believe they’re covered because someone told them backups are running. That’s a dangerous half-truth. More than 60% of organizations believe they can recover from a downtime event within hours, but only 35% could. Only 40% of technology leaders express confidence that their current backup and recovery solution can sufficiently protect critical assets in a disaster (Spanning).

That gap matters in Central Florida because disruption rarely arrives one problem at a time. A hurricane can trigger power instability, internet issues, office closure, and rushed remote work. A cyberattack can hit on the same week your key employee is out and your vendor is slow to respond.

Practical rule: If your team has never restored the systems you rely on, you don’t have proven recovery. You have hope.

Backup is one piece, not the whole strategy

You still need backups, and business owners should understand the basic types of backup because full, incremental, immutable, local, and cloud copies all play different roles. But none of those choices by themselves answer the hard questions:

- Who restores what first

- How employees work during the outage

- Where your clean copy lives if the office is unavailable

- How long the business can wait

- How you communicate with clients, patients, and vendors

A real recovery plan treats downtime like a business interruption issue, not a server issue. That means deciding in advance what must come back first, who owns each task, and what fallback process keeps money moving while systems are restored.

For Florida SMBs, backup and disaster recovery isn’t a technical add-on. It’s continuity planning for hurricanes, cybercrime, hardware failure, and plain bad luck.

Understanding RTO, RPO, and Business Impact

A lot of business owners tune out when they hear technical acronyms. Don’t. Two of them decide whether an outage is an inconvenience or a crisis for your company.

RTO means how long you can be down

Recovery Time Objective, or RTO, is the maximum downtime your business can tolerate before the damage becomes unacceptable. It is comparable to the amount of time your front door can stay locked before the day starts going sideways.

For a medical office, that might mean electronic records and scheduling need to return fast. For a law firm, document management and email may be first. For an accounting office during tax season, the tax platform and file storage move to the top immediately. If you want a plain-English breakdown, this guide to Recovery Time Objectives (RTOs) is useful for non-technical leadership.

RPO means how much data you can afford to lose

Recovery Point Objective, or RPO, is the acceptable amount of lost work between the last good copy and the outage. Think of it as the paperwork gap you’d have to recreate.

If your backup last ran the night before and your server fails at 3 p.m., your business may lose a full day of entries, notes, uploads, or financial activity. Some firms can absorb that. Many can’t.

A dentist may be able to re-enter a few administrative notes. A financial firm may not be able to rebuild the same day’s reconciliations cleanly. A law office may have ethical and operational issues if document versions disappear.
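The 3 p.m. example above is simple arithmetic, and it helps to see it written down. Here is a minimal sketch, assuming the backup finished at 11 p.m. the night before. The timestamps, the function names, and the 4-hour and 24-hour RPO values are illustrative assumptions, not recommendations.

```python
from datetime import datetime, timedelta


def data_loss_window(last_good_backup: datetime, failure_time: datetime) -> timedelta:
    """Return how much work sits between the last good copy and the outage."""
    if failure_time < last_good_backup:
        raise ValueError("failure_time must not be earlier than last_good_backup")
    return failure_time - last_good_backup


def meets_rpo(last_good_backup: datetime, failure_time: datetime, rpo: timedelta) -> bool:
    """True when the lost-work window is within the agreed RPO."""
    return data_loss_window(last_good_backup, failure_time) <= rpo


# Hypothetical timestamps: backup finished at 11 p.m., server failed at 3 p.m. the next day.
last_backup = datetime(2024, 6, 3, 23, 0)
failure = datetime(2024, 6, 4, 15, 0)

window = data_loss_window(last_backup, failure)
print(window)                                                    # 16:00:00
print(meets_rpo(last_backup, failure, rpo=timedelta(hours=4)))   # False
print(meets_rpo(last_backup, failure, rpo=timedelta(hours=24)))  # True
```

Sixteen hours of entries are at risk in this scenario. Whether that is acceptable depends entirely on the RPO the business chose for that system.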

Business impact decides what matters most

Not all systems deserve the same recovery target. That’s where a Business Impact Analysis, or BIA, comes in. It sounds formal, but the exercise is practical. You identify what the business needs to operate, rank those systems, and assign realistic recovery goals.

Start with these questions:

- What system stops revenue first? For many SMBs, it’s scheduling, payments, phones, or line-of-business software.

- What system creates legal or compliance exposure? Client files, patient data, retention systems, and audit records usually land here.

- What can wait until tomorrow? Archive storage, old project data, and less-used internal systems often belong in a lower tier.

A recovery plan fails when it restores everything slowly instead of restoring the right things first.

Why prioritization matters

Many plans break at this stage. Recent reports show that 40% of business disruptions stem from recovery plans that are not aligned with business priorities. That misalignment is why 68% of SMBs that suffer an outage experience downtime lasting more than a full day (Warren Averett).

Those numbers line up with what happens in the field. Teams restore servers in technical order instead of business order. They bring back file shares before scheduling. They recover archived folders before the application that produces invoices. They restore data but forget the dependency chain, such as identity access, internet failover, VPN access, printing, or vendor-hosted application access.
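One way to keep the dependency chain from being forgotten is to write it down as data and let a tool work out a safe order. The sketch below uses Python's standard-library `graphlib` (Python 3.9 or later). The system names and the dependency map are hypothetical; yours will come from your own inventory.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each system lists what must be running before it.
depends_on = {
    "identity": set(),
    "network": set(),
    "file_storage": {"identity", "network"},
    "scheduling_app": {"identity", "network", "file_storage"},
    "billing_app": {"identity", "network", "file_storage"},
    "printing": {"network"},
}


def restore_order(dependencies: dict[str, set[str]]) -> list[str]:
    """Return an order in which every system comes after its prerequisites.

    Raises graphlib.CycleError if two systems depend on each other.
    """
    return list(TopologicalSorter(dependencies).static_order())


order = restore_order(depends_on)
print(order)
```

The exact order can vary between systems that do not depend on each other, but identity and network will always appear before the applications that need them.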

A simple tier model works better than one big plan

| Business tier | What belongs here | Recovery expectation |
|---|---|---|
| Tier 1 | Systems that stop patient care, client service, billing, or communication | Fastest recovery target |
| Tier 2 | Important operational systems that staff need soon after | Restored after core operations |
| Tier 3 | Archives, historical data, low-use tools | Restored later |

For a Central Florida business, this model keeps you honest. It forces a decision: if the office is dark, internet is unstable, or ransomware hits, what gets your team working again first?

That’s what backup and disaster recovery should answer.
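The tier model can be kept as a short, sortable list rather than a long document. This is a minimal sketch under stated assumptions: the system names, tier assignments, and RTO hours are placeholders that each business would set for itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class System:
    name: str
    tier: int         # 1 = restore first, 3 = restore last
    rto_hours: float  # hypothetical target chosen by the business


# Illustrative entries only.
systems = [
    System("archive_storage", tier=3, rto_hours=72),
    System("scheduling", tier=1, rto_hours=2),
    System("internal_wiki", tier=2, rto_hours=24),
    System("billing", tier=1, rto_hours=4),
]


def recovery_queue(items: list[System]) -> list[System]:
    """Order systems by tier first, then by the tightest RTO within a tier."""
    return sorted(items, key=lambda s: (s.tier, s.rto_hours))


queue = recovery_queue(systems)
for position, system in enumerate(queue, start=1):
    print(f"{position}. {system.name} (Tier {system.tier}, RTO {system.rto_hours}h)")
```

With these sample values the queue is scheduling, billing, internal_wiki, then archive_storage.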

Choosing Your Recovery Architecture: On-Prem, Cloud, or Hybrid

Architecture choices aren’t abstract. They affect recovery speed, cost, maintenance burden, and how much risk you carry if your office loses power or access.

A simple way to think about it is this. On-premise recovery is like owning a generator at your building. Cloud-based recovery is like relying on outside infrastructure to keep operations available elsewhere. Hybrid gives you both a local path for speed and an offsite path for serious disruption.

On-premise recovery

On-premise means your backup storage and much of your recovery capability sit inside your office or under your direct control.

That setup can work well when you need very fast restores of local files, large imaging data, or line-of-business systems that staff access all day. It also appeals to firms that want tighter physical control over hardware.

The trade-off is obvious in Florida. If the building has a power event, flood issue, fire, theft, or network equipment failure, the recovery environment may be affected by the same incident as production.

On-premise works best when:

- You need fast local restores for large files or busy production systems

- You have in-house IT capability to monitor hardware, storage health, patching, and backup jobs

- You also keep protected offsite copies so a building-level incident doesn’t take out everything

Cloud recovery and DRaaS

Cloud-based recovery, often delivered as Disaster Recovery as a Service, shifts recovery infrastructure offsite. That can be a strong fit for firms with multiple locations, hybrid work, or limited appetite for maintaining local recovery hardware.

The biggest strength is geographic separation. If your Winter Springs office is unavailable, you still have a path to restore systems elsewhere. The biggest limitation is dependency on provider design, internet performance, and the quality of the failover plan.

Cloud recovery is often a practical option for SMBs that want operational simplicity. It’s also worth reviewing broader cloud disaster recovery options if you’re comparing hosted failover, cloud backups, and full recovery environments.

Cloud recovery protects you from local events better than local-only recovery. It doesn’t remove the need to plan users, access, sequencing, and vendor dependencies.

Hybrid recovery

For many Central Florida SMBs, hybrid is the most sensible architecture. You keep a local recovery path for quick restores and an offsite copy or standby environment for real disaster scenarios.

That matters when you have two very different recovery jobs:

- restoring a deleted folder quickly for a staff member

- keeping the business alive when the office, server, or network is down

Hybrid designs also fit regulated environments well. A medical practice may need fast file-level recovery during normal operations, but also an offsite path for continuity if the local environment is compromised.

On-Premise vs. Cloud vs. Hybrid Recovery Architectures

| Attribute | On-Premise | Cloud (DRaaS) | Hybrid |
|---|---|---|---|
| Control | Highest direct hardware control | Lower direct control, provider-managed components | Shared control |
| Local restore speed | Often strong for local workloads | Depends on bandwidth and design | Strong for priority local restores |
| Resilience to office-level disaster | Weak unless paired with offsite copy | Stronger for geographic separation | Strongest balance for most SMBs |
| Maintenance burden | Highest | Lower internal burden | Moderate |
| Complexity | Lower if environment is simple | Moderate, depends on provider | Highest if poorly designed |
| Best fit | Firms with strong IT ownership and local performance needs | Firms that want offsite resilience and simpler operations | Firms that need both speed and broader continuity |

What works and what doesn’t

What works is choosing architecture based on business operations.

A law office with heavy document use may need fast local recovery plus offsite failover. A dental group with imaging, scheduling, and compliance concerns often benefits from hybrid. A smaller accounting firm with cloud-first apps may lean more heavily on DRaaS if access control and restore testing are solid.

What doesn’t work is buying storage first and asking business questions later. It also doesn’t work to put every workload in one basket, whether that basket is a closet server or a single cloud platform.

Use architecture to support the recovery order you already defined. Not the other way around.

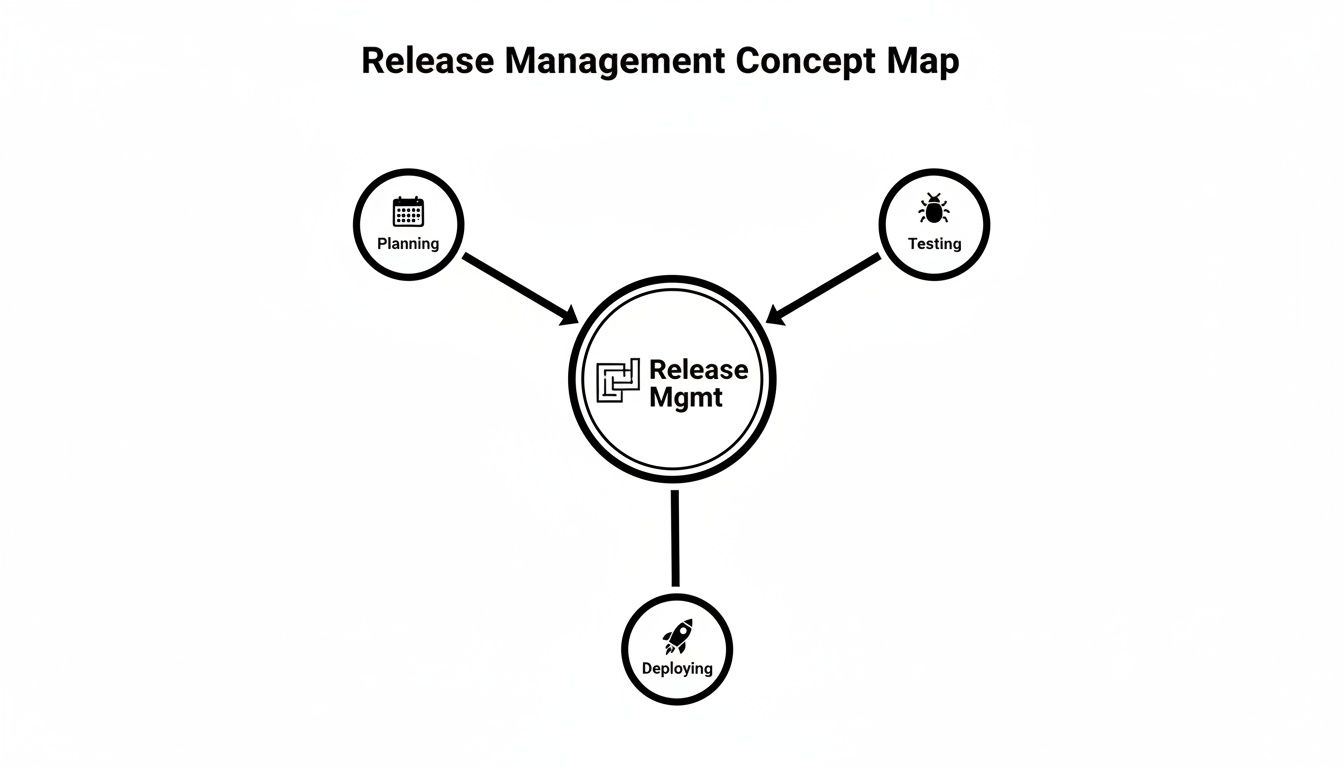

How to Create a Practical Disaster Recovery Policy

A disaster recovery policy should be short enough to use under stress and detailed enough that your team doesn’t guess. If it reads like a generic compliance template, it won’t help when your office is dealing with a ransomware screen, failed storage array, or building outage.

The policy has one job. Tell people exactly what to do, in what order, with what authority.

Put the business inventory first

Start with a clean inventory of what matters:

- Core applications such as practice management, document management, accounting, scheduling, and email

- Infrastructure dependencies such as servers, cloud tenants, firewalls, switches, identity platforms, and internet circuits

- Data locations including laptops, local servers, SaaS platforms, cloud drives, and line-of-business vendors

- Critical vendors whose systems your team can’t operate without

Most weak plans fail here. They list “server outage” as an event but never identify the applications and dependencies attached to that server.
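A quick way to test whether your inventory is complete is to ask it a question: if this one component fails, which applications go with it? The sketch below assumes a hypothetical inventory; component names such as `server-01` are placeholders.

```python
# Hypothetical inventory: application -> infrastructure it depends on.
inventory = {
    "practice_management": {"server-01", "firewall", "internet_circuit"},
    "document_management": {"server-01", "identity_platform"},
    "accounting": {"cloud_tenant", "identity_platform", "internet_circuit"},
    "email": {"cloud_tenant", "identity_platform", "internet_circuit"},
}


def affected_applications(inventory: dict[str, set[str]], failed_component: str) -> list[str]:
    """List every application that depends on the failed component."""
    return sorted(app for app, deps in inventory.items() if failed_component in deps)


print(affected_applications(inventory, "server-01"))
# ['document_management', 'practice_management']
print(affected_applications(inventory, "identity_platform"))
# ['accounting', 'document_management', 'email']
```

If a component you know is critical returns an empty list, the inventory is missing dependencies.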

Assign roles before you need them

During an outage, confusion wastes more time than bad hardware. Your policy should name who makes decisions and who executes tasks.

A practical small-business structure usually includes:

| Role | Responsibility |
|---|---|
| Business owner or executive | Declares business impact and approves major recovery decisions |
| IT lead or managed provider | Runs technical recovery steps and escalation |
| Department manager | Validates business function after restore |
| Communications owner | Notifies staff, clients, patients, and vendors |
| Compliance or privacy contact | Reviews obligations involving sensitive data |

Write names, alternates, phone numbers, and non-email contact methods into the document. If email is down, an email-only contact list is useless.
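If the contact list lives in a spreadsheet or a small script, a simple check can flag roles that would be unreachable when email is down. This is a sketch only; the `RecoveryContact` structure, names, and phone numbers are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RecoveryContact:
    role: str
    name: str
    alternate: Optional[str] = None  # backup person for the role
    phone: Optional[str] = None      # any non-email channel
    email: Optional[str] = None


def contact_gaps(contacts: list[RecoveryContact]) -> list[str]:
    """Return a plain-language list of problems in the contact sheet."""
    gaps = []
    for contact in contacts:
        if not contact.alternate:
            gaps.append(f"{contact.role}: no alternate named")
        if not contact.phone:
            gaps.append(f"{contact.role}: no non-email contact method")
    return gaps


# Hypothetical entries.
contacts = [
    RecoveryContact("Business owner", "Owner A", alternate="Manager B",
                    phone="555-0100", email="owner@example.com"),
    RecoveryContact("IT lead", "Provider C", email="support@example.com"),
]

gaps = contact_gaps(contacts)
for gap in gaps:
    print(gap)
# IT lead: no alternate named
# IT lead: no non-email contact method
```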

Build the checklist in recovery order

Your runbook should follow the order of operations, not the order equipment appears in a rack.

A practical checklist often looks like this:

1. Contain the problem. Is this ransomware, hardware failure, accidental deletion, or site outage? Isolation may matter before restoration begins.

2. Declare the recovery mode. Are you restoring files, failing over a server, or shifting staff to remote work?

3. Restore Tier 1 systems first. Focus on systems that keep patient care, client communication, billing, or scheduling moving.

4. Validate access with real users. A server being “up” doesn’t mean the front desk can print, the attorney can open a file, or the accountant can post transactions.

5. Document what changed. Track restored versions, temporary workarounds, and any security concerns discovered during recovery.

A good policy doesn’t try to predict every failure. It gives your team a clear chain of command and a repeatable decision path.

Tailor the policy to your industry

A generic plan won’t satisfy the operational realities of regulated businesses.

For healthcare practices, the requirement is more specific. HIPAA’s Security Rule requires a documented contingency plan that covers data backup, disaster recovery, and emergency-mode operations. It does not prescribe specific numbers, so the practice has to set and document its own RTO and RPO targets. Expert benchmarks cited by Accountable HQ suggest that deploying a hybrid solution with automated verification can reduce effective RTO by up to 80%. That matters in real clinical workflows where scheduling, chart access, and e-prescribing can’t stay down long without affecting care.

For law firms, the policy should address client confidentiality during emergency access, remote work controls, and how ethical walls remain enforced if normal systems are unavailable.

For accounting and financial firms, document retention, access controls, and audit trail preservation should be explicit. Recovery isn’t complete if the data returns without the records needed to prove integrity.

Include the communication script

Most businesses focus on systems and forget people. Your policy should include prewritten templates for:

- Internal staff updates

- Client or patient notifications

- Vendor escalation requests

- Public-facing service disruption messages

Short, calm, and factual beats long and vague. During a recovery event, people need to know what’s affected, what to do next, and when the next update arrives.

Validating Your Plan Before Disaster Strikes

A backup and disaster recovery plan that nobody has tested will fail at the worst possible time. Not because the idea was bad, but because reality always exposes missing permissions, broken dependencies, expired credentials, and undocumented shortcuts.

That’s why validation matters more than how polished the document looks.

The testing gap is real

The numbers here are ugly. 71% of organizations perform no failover testing to ensure their outage prevention protocols work, 62% fail to conduct regular system backup and restoration exercises, and 25% have no controls in place to prevent malicious access to their backup infrastructure (Secureframe).

That combination is exactly what attackers want. If backups aren’t tested and backup systems aren’t protected, recovery can fail twice. First during the attack, then again during the attempted restore.

Testing doesn’t have to shut down your office

Owners often resist testing because they assume it means a painful all-day outage. It doesn’t.

Use layers of validation:

1. Tabletop exercise. Leadership and operations staff walk through a realistic outage scenario and identify decision gaps.

2. File-level restore test. Restore selected files or folders to confirm backup integrity and permissions.

3. Application recovery test. Recover a non-production instance of a key application and verify staff can use it.

4. Failover simulation. Conduct an after-hours or planned test of the broader recovery path.
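For the file-level restore test, one common integrity check is comparing checksums. The sketch below is an illustration, not a replacement for your backup tool's own verification. It assumes you have a reference copy, or a hash recorded at backup time, to compare against. The file names are hypothetical, and it does not check permissions.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def restore_matches(reference: Path, restored: Path) -> bool:
    """True when the restored file is byte-for-byte identical to the reference."""
    return sha256_of(reference) == sha256_of(restored)


# Self-contained demo using temporary files in place of a real restore.
with tempfile.TemporaryDirectory() as tmp:
    reference = Path(tmp) / "ledger.txt"
    restored_good = Path(tmp) / "ledger_restored.txt"
    restored_bad = Path(tmp) / "ledger_corrupt.txt"

    reference.write_bytes(b"client ledger v1")
    restored_good.write_bytes(b"client ledger v1")
    restored_bad.write_bytes(b"client ledger v1 (truncated")

    good_ok = restore_matches(reference, restored_good)
    bad_ok = restore_matches(reference, restored_bad)

print(good_ok)  # True
print(bad_ok)   # False
```

A matching hash only proves the bytes are identical. Someone still needs to open the file in the real application and confirm it is usable.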

A useful resource on structuring those exercises is this guide to disaster recovery testing.

Untested recovery plans usually fail on the small details: service accounts, application sequence, printer mapping, remote access, line-of-business licensing, and user validation.

What to verify each time

Don’t treat testing like a box-checking exercise. Validate outcomes that matter to the business:

| Test area | What to confirm |
|---|---|
| Data integrity | Files open, databases mount, and restored records are usable |
| Access control | Correct users can log in and unauthorized access remains blocked |
| Dependency chain | Authentication, networking, storage, and application sequence work together |
| Communication | Staff know who declares the event and where updates come from |
| Recovery timing | Actual restore time is compared to your target |

The best tests create evidence. Save screenshots, timestamps, notes on what failed, and the actions needed to fix it. That turns testing into operational improvement instead of annual theater.
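Timestamps become useful evidence once they are compared to the target. Here is a small sketch of that comparison. The `RestoreTest` record, the system names, and the times are hypothetical, and "usable" here means the moment a real user confirmed the system worked, not when the server came up.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class RestoreTest:
    system: str
    started: datetime
    usable: datetime   # when a real user confirmed the system worked
    rto: timedelta     # target agreed with the business

    @property
    def actual(self) -> timedelta:
        return self.usable - self.started

    @property
    def within_target(self) -> bool:
        return self.actual <= self.rto


# Hypothetical results from an after-hours test.
results = [
    RestoreTest("scheduling", datetime(2024, 5, 18, 19, 0),
                datetime(2024, 5, 18, 20, 30), timedelta(hours=2)),
    RestoreTest("billing", datetime(2024, 5, 18, 19, 0),
                datetime(2024, 5, 19, 0, 15), timedelta(hours=4)),
]

for result in results:
    status = "met" if result.within_target else "MISSED"
    print(f"{result.system}: took {result.actual}, target {result.rto}, {status}")
# scheduling: took 1:30:00, target 2:00:00, met
# billing: took 5:15:00, target 4:00:00, MISSED
```

A missed target is not a failed test. It is a finding to fix before the real event.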

For Central Florida firms, I recommend tying tests to seasonal risk and business cycles. Don’t run your only meaningful exercise when everyone is already overloaded.

Evaluating DR Vendors and Managed Services

Most SMBs shouldn’t try to run mature backup and disaster recovery alone. The issue isn’t intelligence. It’s bandwidth, specialization, and the fact that recovery depends on constant maintenance that owners and office managers rarely have time to supervise.

The right vendor isn’t just selling storage. They’re taking responsibility for design assumptions, monitoring, recovery sequence, testing discipline, and security controls around the backup environment itself.

Ask operational questions, not marketing questions

Don’t start with “How much storage do we get?” Start with the questions that expose whether the provider understands business continuity.

Ask things like:

- What is your process when recovery starts at 2 a.m. on a weekend?

- Who validates the restore with our staff?

- How do you protect backup systems from unauthorized access?

- How often do you require restore testing?

- How do you handle SaaS data, local servers, and cloud workloads differently?

- What dependencies do you map before declaring a plan complete?

- How do you support firms in regulated fields like healthcare, finance, or legal?

A serious provider should answer in operational detail, not generic promises.

Look for evidence of process maturity

You want proof that the vendor runs repeatable systems. That includes documented runbooks, named escalation paths, monitoring, reporting, and regular review meetings.

A vendor should be able to explain:

| Evaluation area | What good looks like |
|---|---|
| Monitoring | Backup jobs, storage health, failures, and unusual activity are actively reviewed |
| Security | Backup infrastructure is segmented, access is restricted, and changes are auditable |
| Testing | Restores and failover exercises happen on a schedule, not only after incidents |
| Communication | Clear contacts, escalation rules, and client-facing status updates exist |
| Fit | The vendor understands your industry workflow, not just generic infrastructure |

Regional experience matters in Florida

Ask directly how the provider handles hurricanes, office closures, generator limitations, internet instability, and remote work surges. A vendor can be technically capable and still unprepared for how Central Florida businesses operate during a regional event.

If you’re comparing managed options, shortlist companies that specialize in disaster recovery as a service and compare them on process depth, not brochure language.

One option in this category is Cyber Command, LLC, which provides managed backup and disaster recovery, monitoring, failover planning, and SOC-backed security support as part of broader managed IT and cybersecurity services. That kind of bundled model can make sense when your recovery plan depends on helpdesk, endpoint protection, vendor management, and incident response all working together.

The wrong vendor gives you backup status emails. The right vendor shows you how the business will run when systems fail.

Warning signs

Walk away if a provider can’t explain testing cadence, can’t define recovery order, or treats compliance as somebody else’s problem. Also be cautious if every answer points back to a single product. Good recovery design is about process and fit, not just platform branding.

Your Actionable Disaster Recovery Checklist

If you’re a busy owner in Orlando, Winter Springs, or anywhere in Central Florida, start here. Don’t wait for the perfect project plan.

Print this and work through it

1. List your three most critical business applications. Pick the systems that stop revenue, service delivery, or compliance first.

2. Set a downtime limit for each one. Decide how long each system can be unavailable before the business is in trouble.

3. Decide how much recent work you can afford to lose. Be honest. For some systems, even a small data gap creates operational pain.

4. Inventory where your data lives. Include local servers, cloud apps, Microsoft 365 or Google Workspace data, laptops, shared drives, and vendor platforms.

5. Map dependencies. Note what each critical system needs to function, such as internet, identity access, printers, phones, or third-party software.

6. Confirm you have both backup and a recovery process. A copy of data is not the same thing as a working restoration sequence.

7. Review who does what during an outage. Name decision-makers, technical responders, department validators, and communications contacts.

8. Protect the backup environment. Limit access, review permissions, and make sure the recovery platform isn’t exposed to the same risk as production.

9. Schedule your first test. Start with a tabletop exercise, then move to a controlled restore test.

10. Review the plan on a calendar. Update it when systems change, staff leave, offices move, or vendors change.

A workable backup and disaster recovery program starts with clarity, not complexity.

Frequently Asked Questions About Disaster Recovery

What’s a realistic monthly budget for managed DR for a 20-person company in Florida?

There isn’t one honest flat number that fits every business. Cost depends on how many systems you need to protect, how fast you need them back, whether you need local and cloud recovery, compliance requirements, and how much testing and vendor coordination is included. A small office with mostly SaaS apps will look different from a medical or legal practice with local systems and larger files.

How does a good DR plan help with HIPAA or financial compliance?

It creates documented recovery procedures, access control expectations, testing evidence, and defined responsibilities. Auditors and assessors usually care less about buzzwords and more about whether you can show that sensitive systems and data can be restored in a controlled, documented way.

Why can’t I just use Dropbox or Google Drive as my backup?

File sync isn’t the same as backup and disaster recovery. Sync tools are useful for collaboration, but they don’t replace versioned backup strategy, application-aware recovery, recovery sequencing, security controls, or tested failover planning. If bad data syncs, deletion syncs, or ransomware-encrypted files sync, you may just spread the problem faster.

If your business in Orlando, Winter Springs, or the broader Central Florida area needs a practical backup and disaster recovery plan, Cyber Command, LLC can help you evaluate your current gaps, define realistic recovery priorities, and build a managed approach that supports uptime, security, and compliance without turning recovery into a guess during an actual outage.